TSLA forecast

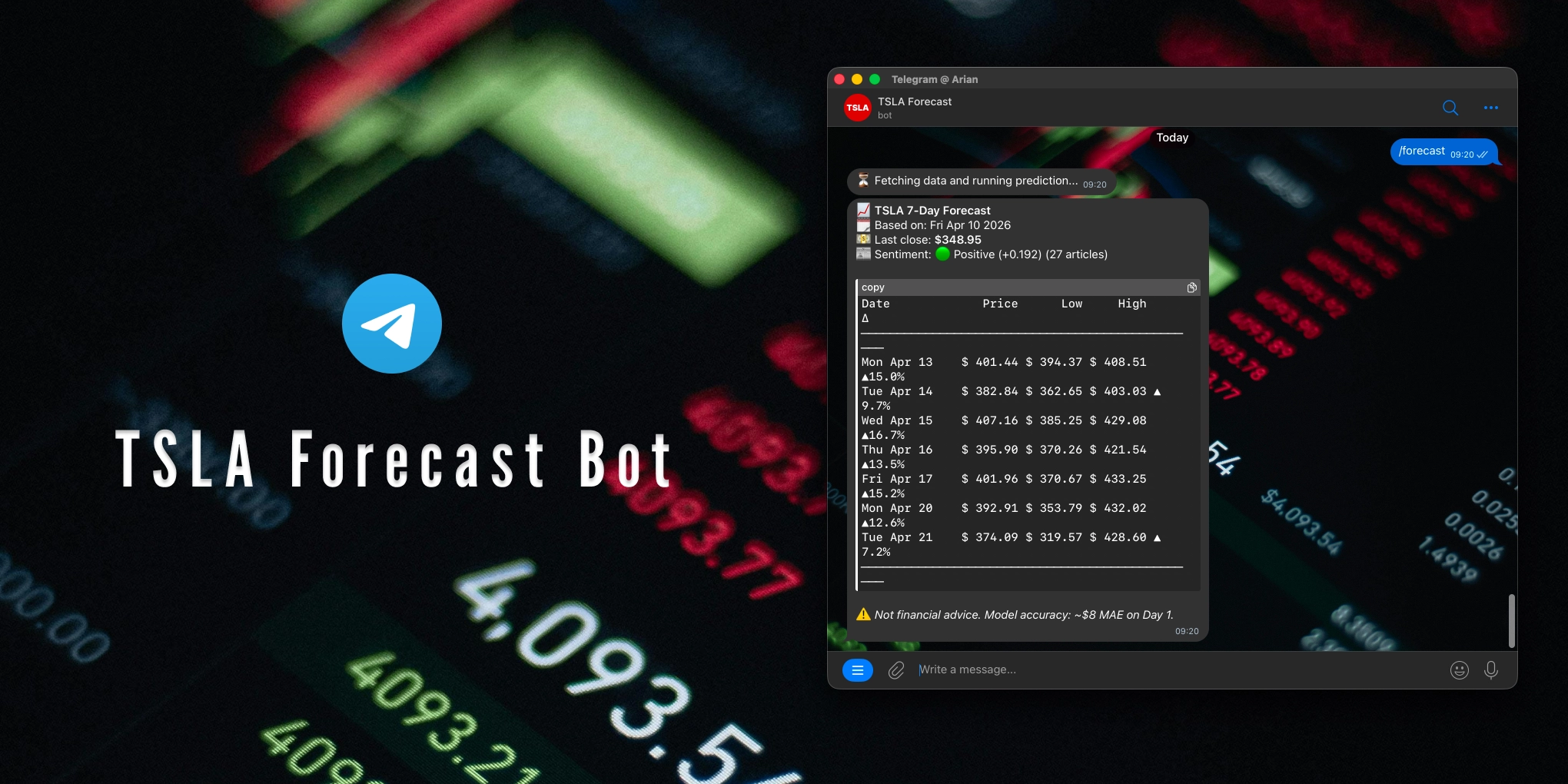

A live Telegram bot that forecasts TSLA stock using a Bidirectional LSTM, sentiment analysis, and 17 engineered features, deployed on AWS EC2.

A real-time TSLA stock forecasting system built on a Bidirectional LSTM. The model ingests 17 engineered features including macro indicators and VADER sentiment analysis. Forecasts are surfaced through a Telegram bot deployed on AWS EC2.

Disclaimer: this project is for educational purposes only and does not constitute financial advice. Do not use forecast outputs to make investment decisions.

Key Features

- Bidirectional LSTM model trained on 15 years of TSLA historical data

- 17 engineered features (OHLCV, technical indicators, macro data, sentiment, earnings calendar)

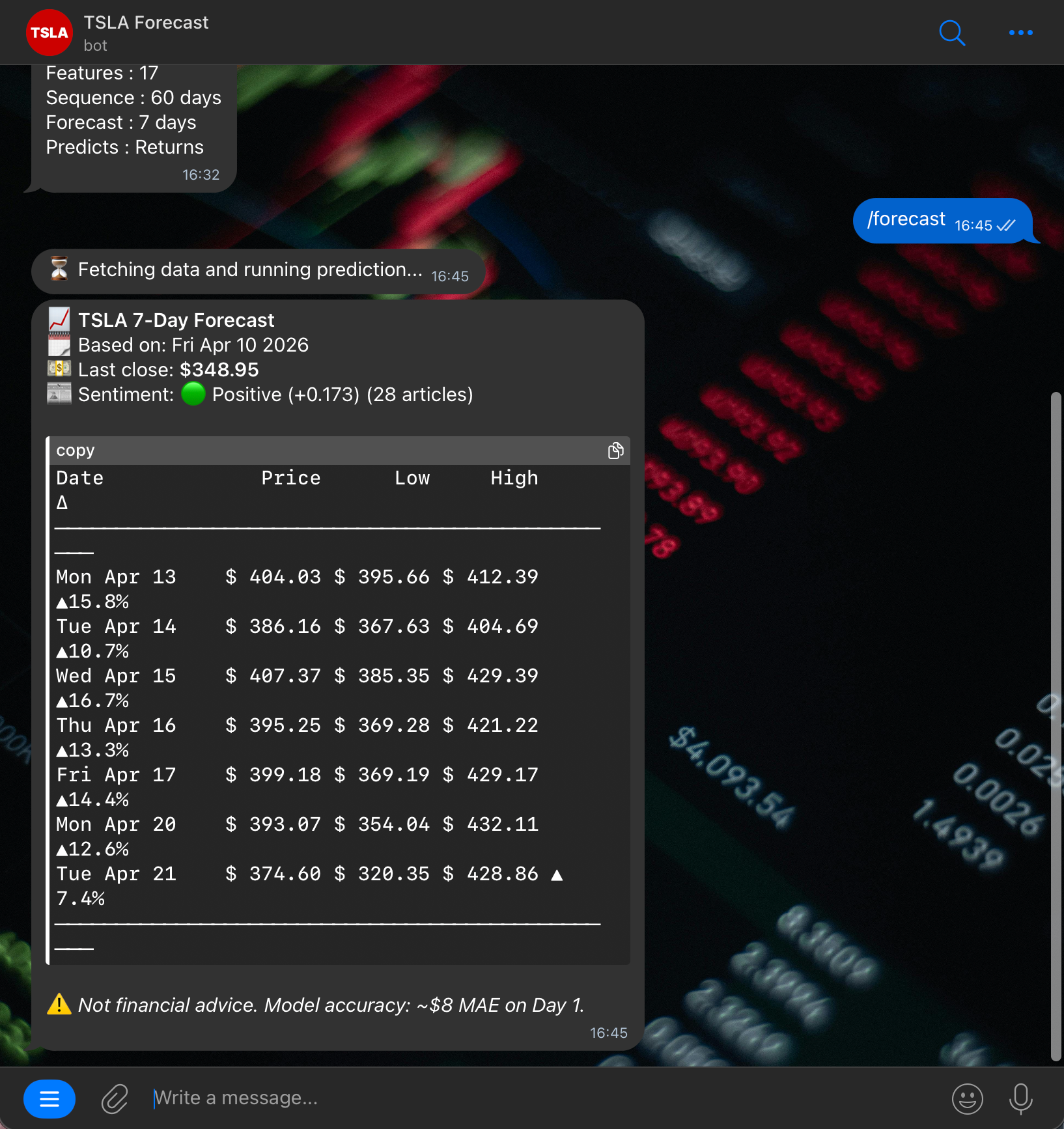

- Live news sentiment via NewsAPI + VADER sentiment analysis

- Earnings awareness (days until next TSLA earnings)

- Macro context (NASDAQ, Dollar Index, 10Y Treasury, Oil prices)

- Seven-day price forecasts

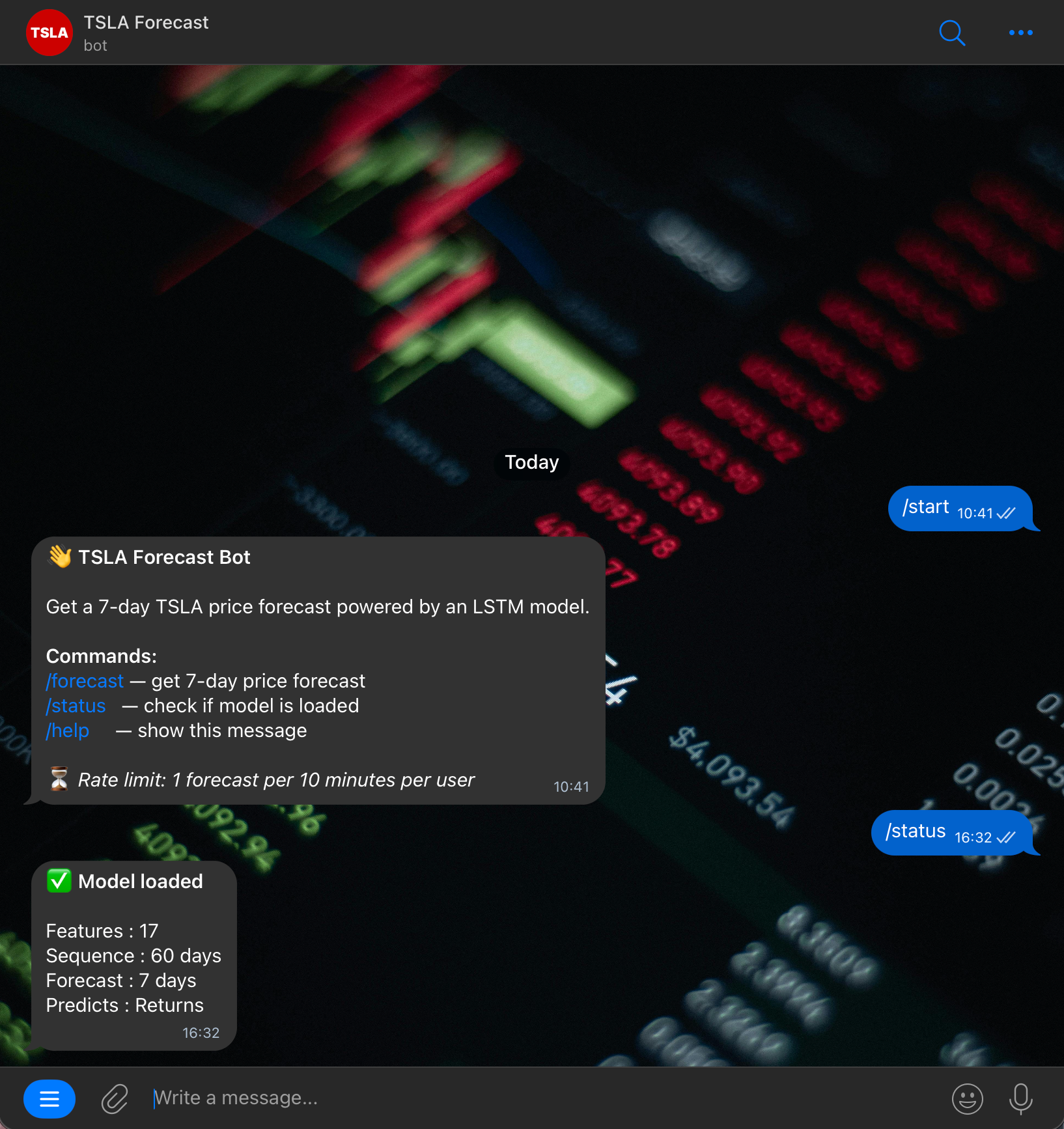

- Rate-limited Telegram bot (1 request per 10 minutes per user)

- AWS EC2 deployment with persistent runtime
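The per-user rate limit listed above (one request per 10 minutes) can be enforced with very little state. The following is a minimal sketch, not the bot's actual code: the class name `PerUserRateLimiter`, the method `try_acquire`, and the in-memory dictionary are all assumptions made for illustration.

```python
import time


class PerUserRateLimiter:
    """Hypothetical helper: allow each user one request per `window_seconds`."""

    def __init__(self, window_seconds=600, clock=time.monotonic):
        self.window_seconds = window_seconds
        self.clock = clock          # injectable so the limiter can be tested
        self._last_allowed = {}     # user_id -> time of the last accepted request

    def try_acquire(self, user_id):
        """Return 0.0 if the request is allowed, else the seconds left to wait."""
        now = self.clock()
        last = self._last_allowed.get(user_id)
        if last is not None and now - last < self.window_seconds:
            return self.window_seconds - (now - last)
        self._last_allowed[user_id] = now
        return 0.0
```

A message handler would call `try_acquire(user_id)` first and reply with the remaining wait time when the result is non-zero. Because the state lives in memory, the limits reset whenever the process restarts.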

tech stack

# model

python 3.11 | tensorflow 2.16 | scikit-learn | pandas | numpy

# sentiment

vadersentiment | newsapi

# infrastructure

aws ec2 | telegram bot api | yfinance

feature engineering

The model is trained on 17 features across five groups.

# Price & Volume (OHLCV)

open | high | low | close | volume

# Technical Indicators

rsi | macd | bollinger bands | moving averages | volatility
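As a rough illustration of how indicators like these can be derived from the closing price with pandas, here is a minimal sketch. The window lengths (14, 12/26, 20) are conventional defaults rather than values taken from this project, and the function and column names are hypothetical.

```python
import pandas as pd


def add_indicators(close: pd.Series) -> pd.DataFrame:
    """Hypothetical helper: derive common technical indicators from closing prices."""
    out = pd.DataFrame({"close": close})

    # RSI(14): compares exponentially smoothed gains against smoothed losses
    delta = close.diff()
    gain = delta.clip(lower=0).ewm(alpha=1 / 14, adjust=False).mean()
    loss = (-delta.clip(upper=0)).ewm(alpha=1 / 14, adjust=False).mean()
    out["rsi"] = 100 - 100 / (1 + gain / loss)

    # MACD line: fast EMA(12) minus slow EMA(26)
    ema_fast = close.ewm(span=12, adjust=False).mean()
    ema_slow = close.ewm(span=26, adjust=False).mean()
    out["macd"] = ema_fast - ema_slow

    # 20-day moving average with Bollinger bands at +/- 2 standard deviations
    sma = close.rolling(20).mean()
    std = close.rolling(20).std()
    out["sma_20"] = sma
    out["bb_upper"] = sma + 2 * std
    out["bb_lower"] = sma - 2 * std

    # Volatility: rolling standard deviation of daily returns
    out["volatility"] = close.pct_change().rolling(20).std()
    return out
```

The first rows of the rolling columns are NaN until a full window is available, so they would need to be dropped or filled before training.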

# Macro Indicators

nasdaq (^IXIC) | dxy (DX=F) | tnx (10y treasury yield) | oil (CL=F)

# Sentiment

vader compound score (daily news headlines)
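One way to turn a day's headlines into a single sentiment feature is to average their VADER compound scores. This is a minimal sketch under assumptions: the averaging step, the neutral fallback of 0.0 on days without headlines, and the helper names are illustrative and not confirmed by the project.

```python
from statistics import mean


def make_vader_scorer():
    """Build a headline -> compound score function (requires the vaderSentiment package)."""
    from vaderSentiment.vaderSentiment import SentimentIntensityAnalyzer

    analyzer = SentimentIntensityAnalyzer()
    return lambda text: analyzer.polarity_scores(text)["compound"]


def daily_compound(headlines, score_fn, default=0.0):
    """Average the compound score of non-empty headlines; return `default` if there are none."""
    scores = [score_fn(h) for h in headlines if h and h.strip()]
    return mean(scores) if scores else default
```

Typical usage would be `daily_compound(todays_headlines, make_vader_scorer())`. Passing the scorer in as an argument keeps the aggregation testable without the sentiment package installed.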

# Calendar

days until next TSLA earnings

highlights

A few screenshots from the bot, showing the forecast output and interface.

Building the TSLA forecast bot showed that the data pipeline matters as much as the model: yfinance can return inconsistent data, macro tickers have gaps, and NewsAPI enforces a daily request limit, so defensive data handling became a core part of the design.
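To make "defensive data handling" concrete, the sketch below aligns macro series to the stock's trading days, carries the last known value forward across short gaps, and fails loudly if anything is still missing. The function name, the `max_fill` limit of 3 rows, and the choice to raise an error are assumptions, not the project's actual pipeline.

```python
import pandas as pd


def align_macro(trading_days: pd.DatetimeIndex, macro: pd.DataFrame, max_fill: int = 3) -> pd.DataFrame:
    """Hypothetical helper: put macro columns on the trading calendar and fill short gaps."""
    aligned = (
        macro.sort_index()
        .reindex(macro.index.union(trading_days))  # keep macro-only dates so they can seed the fill
        .ffill(limit=max_fill)                     # carry the last value forward, at most max_fill rows
        .reindex(trading_days)                     # keep only the days the model trains on
    )
    missing = aligned.columns[aligned.isna().any()].tolist()
    if missing:
        # Stop early rather than feed NaNs into the model
        raise ValueError(f"unfilled gaps in macro columns: {missing}")
    return aligned
```

Capping the forward fill keeps a long outage in one ticker from silently turning into a flat, stale feature.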

Deploying on EC2 also meant thinking beyond notebooks: managing a persistent process, handling crashes gracefully, and retraining the model directly on the instance to resolve TensorFlow version mismatches all shaped the final design.
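A simple way to keep a long-running bot alive after a crash is a supervisor loop with exponential backoff. This is only a sketch of the idea: `run_forever`, `start_bot`, and the backoff values are hypothetical, and the project may instead rely on a process manager such as systemd.

```python
import logging
import time


def run_forever(start_bot, max_backoff=300, sleep=time.sleep, max_restarts=None):
    """Restart `start_bot` after crashes, doubling the wait each time up to `max_backoff` seconds.

    Returns the number of restarts once `start_bot` exits cleanly.
    """
    delay, restarts = 1, 0
    while True:
        try:
            start_bot()          # hypothetical callable that blocks while the bot is polling
            return restarts      # clean exit: stop supervising
        except Exception:
            logging.exception("bot crashed; restarting in %s s", delay)
            restarts += 1
            if max_restarts is not None and restarts >= max_restarts:
                raise            # give up and surface the last error
            sleep(delay)
            delay = min(delay * 2, max_backoff)
```

`KeyboardInterrupt` is not a subclass of `Exception`, so Ctrl+C still stops the process instead of triggering a restart.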